iOS 17 expands protection against unsolicited pictures to all iPhone users – Times of India

Apple’s Communication Safety feature, intended to safeguard children from accessing explicit images on iMessage, will now be extended to cover adult users and other forms of communication, including videos.

What is the Communication Safety feature?

The Communication Safety in Messages feature uses on-device machine learning to automatically blur unsolicited images in iMessage, and the same approach will now be applied to videos. Apple says that all image and video processing is performed on the device, so that not even the company can access the content.

At present, the Communication Safety tool is available only for minors, as an opt-in feature in Apple’s Family Sharing system. When enabled, it identifies images that may contain nudity when a child sends or receives them. If such an image is detected, the child is notified and the image is blurred before it can be viewed on their device. The child is also given helpful resources and the option to message a trusted adult for further assistance.

With iOS 17, Communication Safety will help safeguard children from viewing or sharing photos containing nudity through AirDrop, the new Contact Posters, FaceTime messages, and the Photo Picker when browsing their photo library. The feature also extends to video content, where it can detect nudity.

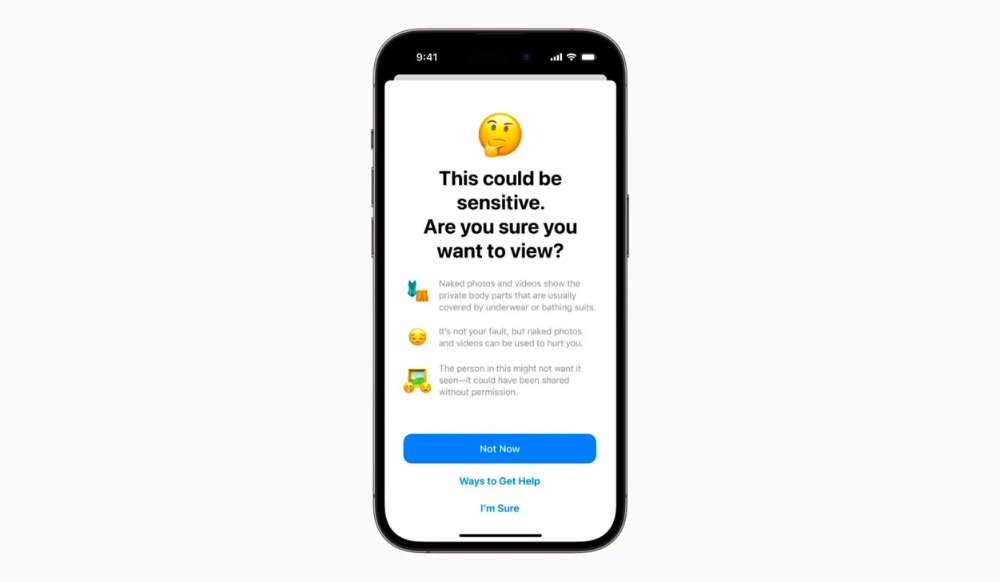

The “Sensitive Content Warning” protects all users, whether adults or minors, from receiving unsolicited pictures.

If an image or video contains nudity, a pop-up warning will appear regardless of the user’s age. “Naked photos and videos show the private body parts that are usually covered by underwear or bathing suits,” the pop-up explains. “It’s not your fault, but naked photos and videos can be used to hurt you.” The message asks whether the user wants to view the content, and also offers reassurance and helpful guidance on staying safe.

